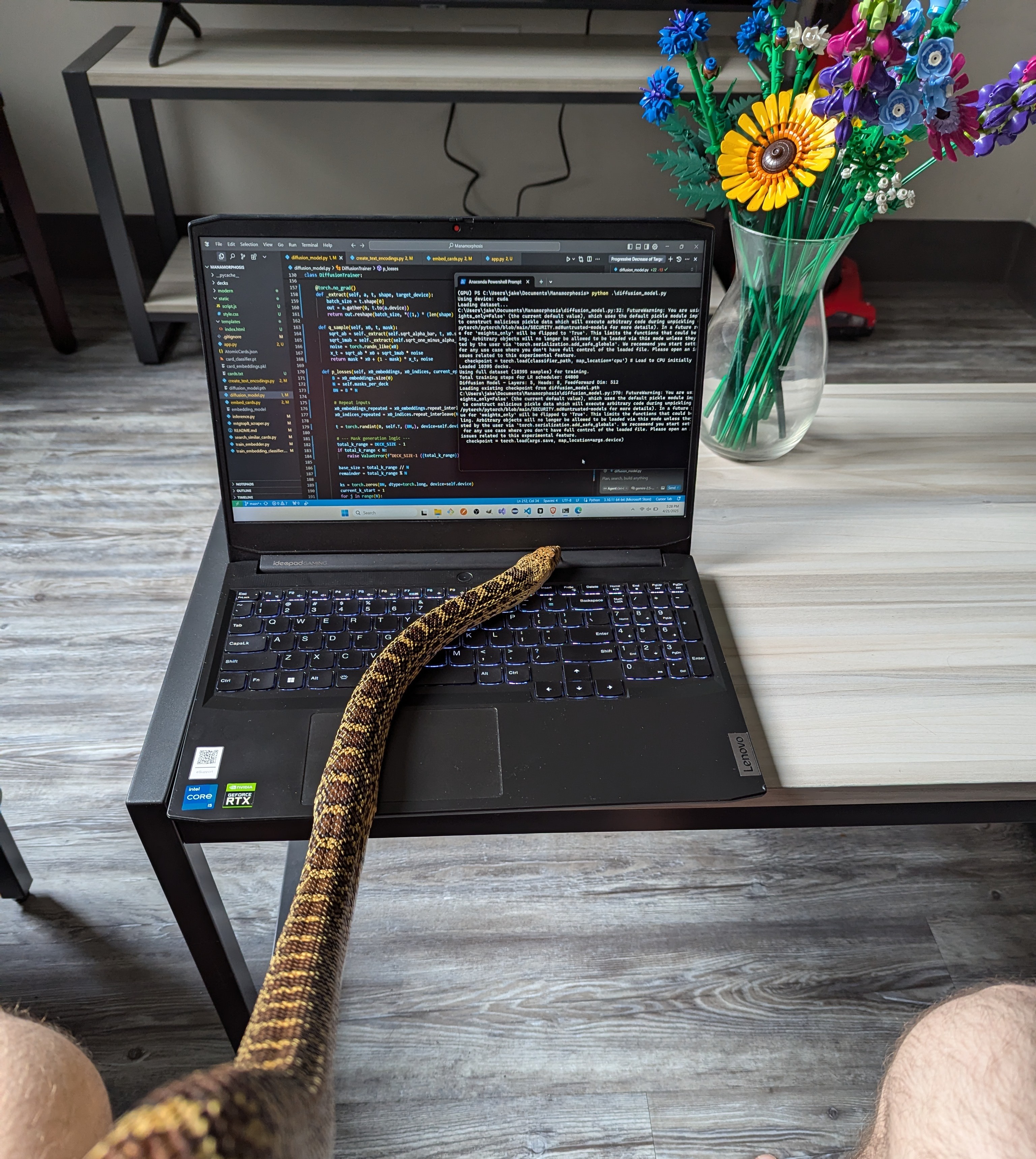

My name is Jake and I have a snake. Currently at Tzafon, previously Endeavor, and before that I studied computer science and math at NC State, which I left senior year to move to San Francisco. I post here occasionally to share thoughts and projects.

Research Interests

Evals. If what gets measured gets improved, then the starting point for developing any new capability is to make a benchmark for it. I believe this is one of the highest-leverage ways to drive progress.

I also maintain a capabilities index of the benchmarks I think are valuable.

Harnesses. Models are only useful if you can apply them. There’s a lot of low-hanging fruit here.

A few of my favorites:

- RuneBench. AI writes code to play RuneScape

- Remote Labor Index. CUA harness for measuring progress towards full automation of standard online freelance work. Still very unsaturated: no model gets above 5%

- Kosmos. This one helped with research for the genetic engineering project below

Reinforcement learning. A combination of the above. Make a harness to do a task, an evaluation to measure the output quality, and now you have an RL environment.
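As a rough illustration of that recipe, here is a minimal Python sketch. Everything in it is hypothetical and made up for the example (the `Environment` class, the toy string-reversal task, and the `toy_*` functions); it is not taken from any real project or library. It only shows the shape of the idea: a harness that applies a policy to a task, an evaluation that scores the output, and a rollout that pairs the two into a reward.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# A "policy" here is just anything that maps a prompt to a response
# (a stand-in for a model call; hypothetical for this sketch).
Policy = Callable[[str], str]


@dataclass
class Environment:
    """Harness + evaluation bundled together (illustrative only)."""
    harness: Callable[[Policy, str], str]   # (policy, task) -> output
    evaluate: Callable[[str, str], float]   # (task, output) -> score

    def rollout(self, policy: Policy, task: str) -> Tuple[str, float]:
        # Run the policy through the harness, then score what it produced.
        output = self.harness(policy, task)
        return output, self.evaluate(task, output)


def toy_harness(policy: Policy, task: str) -> str:
    # The harness decides how the task is presented to the policy.
    return policy(f"Reverse this string: {task}")


def toy_evaluate(task: str, output: str) -> float:
    # The evaluation is a benchmark-style check: exact match or nothing.
    return 1.0 if output == task[::-1] else 0.0


def toy_policy(prompt: str) -> str:
    # A hand-written "model" that happens to solve the toy task.
    text = prompt.split(": ", 1)[1]
    return text[::-1]


env = Environment(harness=toy_harness, evaluate=toy_evaluate)
output, reward = env.rollout(toy_policy, "lotus")
print(output, reward)
```

In a real setup the policy would be a model, the harness would be something like a game client or a computer-use loop, and the reward would feed a training algorithm; none of that is shown here.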

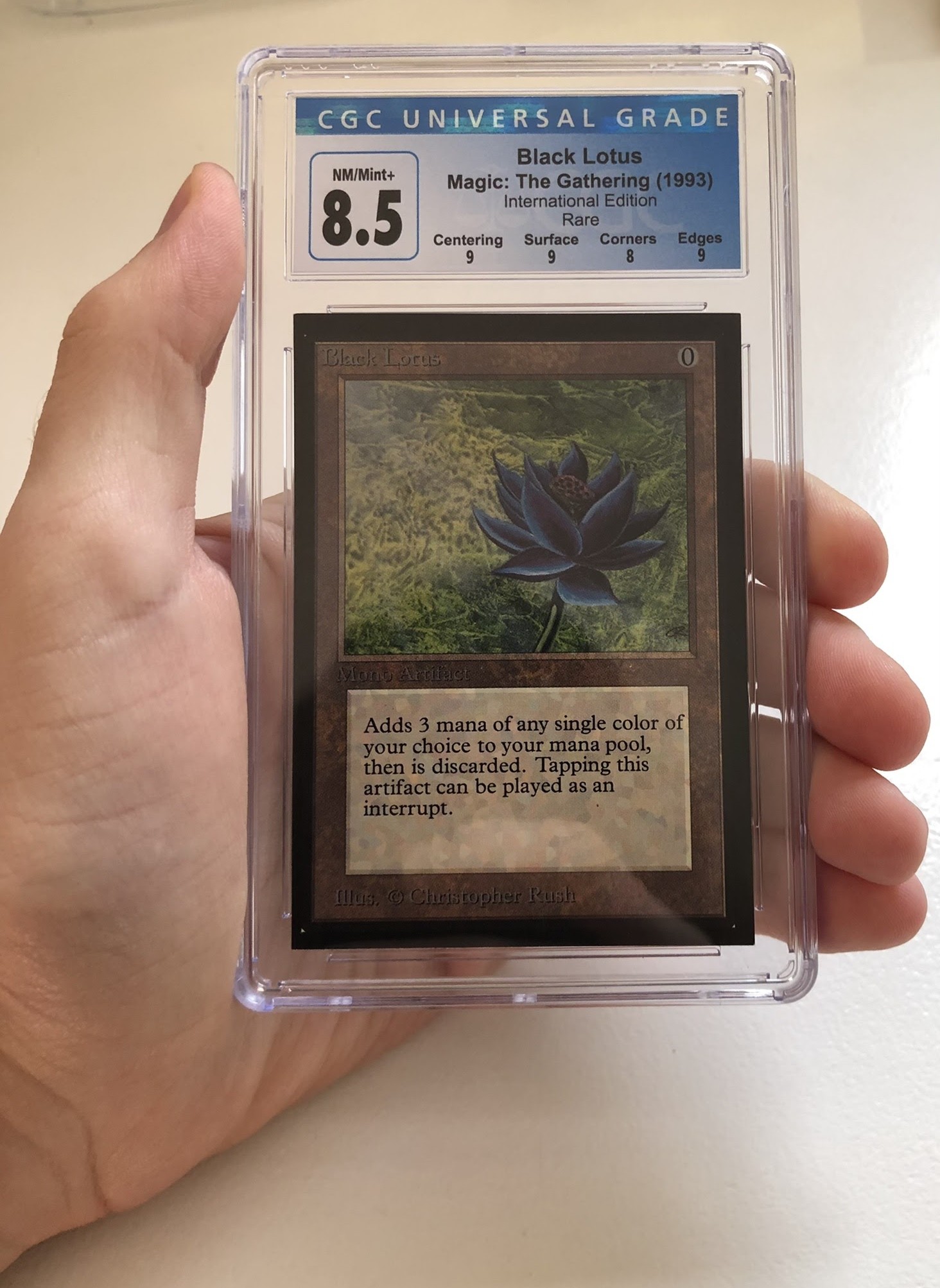

My current pet project: engineering a real-life Black Lotus. There are two primary motivations here:

- It’s cool and I want one

- I now have an excuse to train some novel DNA sequence architectures

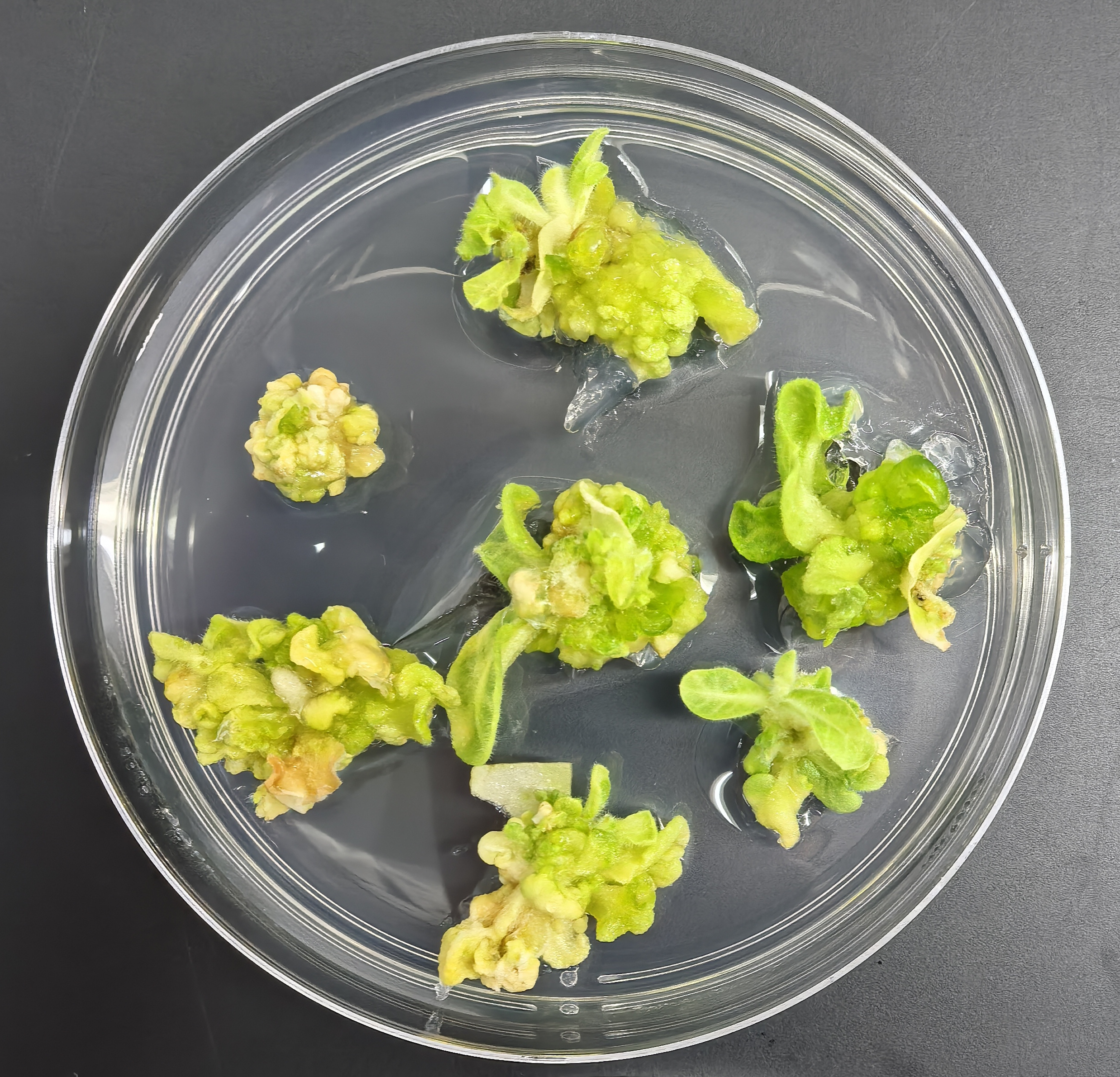

My initial approach combines a couple of genes to boost anthocyanin production in the petals, and I’m paying a lab to carry out the experiment. I’ll post more about this as results arrive over the summer.

MAY UPDATE: the plants survived the initial transformation and the pigment doesn’t appear to be leaking into the wrong tissues. This is a big win. The next test will be whether they flower and show any color at all.

Gallery